The integrity layer for AI systems

Verify, trace and validate AI pipelines directly inside your IDE against real data, not assumptions.

Faster debugging

Fewer model failures

Automated documentation

THE PROBLEM

AI failures don’t start where they show up.

When something breaks in an AI system, the symptom appears in production. But the root cause is usually buried upstream. A dataframe was silently reshaped. A merge introduced duplicates three steps back. An AI coding assistant hallucinated a plausible transformation that broke downstream logic.

The problem isn’t monitoring. It’s visibility.

Nothing in the current toolchain captures how code and data actually interact across the full pipeline. When things fail, teams trace backwards manually, line by line, writing throwaway test code just to isolate the issue.

What’s missing isn’t more tools. It’s an integrity layer, a verification system that sits between the human and their AI assistants, ensuring that what’s built actually works, at every stage, against the real data.

THE MISSING LAYER

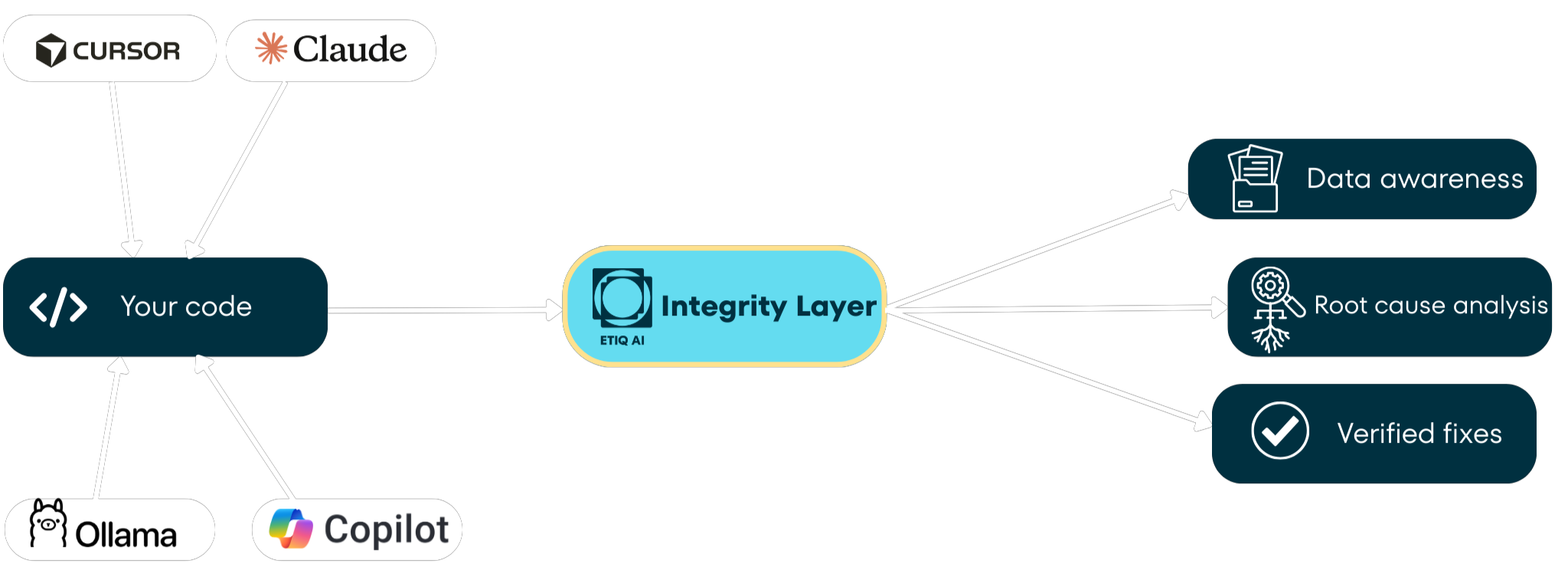

If AI writes the code, who verifies it?

Modern AI development introduced powerful assistants.

But it also introduced non-determinism.

Code is suggested, transformed, and recombined faster than humans can reason about it.

What’s missing isn’t another monitoring dashboard.

It’s a layer that continuously verifies how code and data interact at every step of the pipeline. That layer sits between the developer and the AI assistant.

It ensures what’s built actually works.

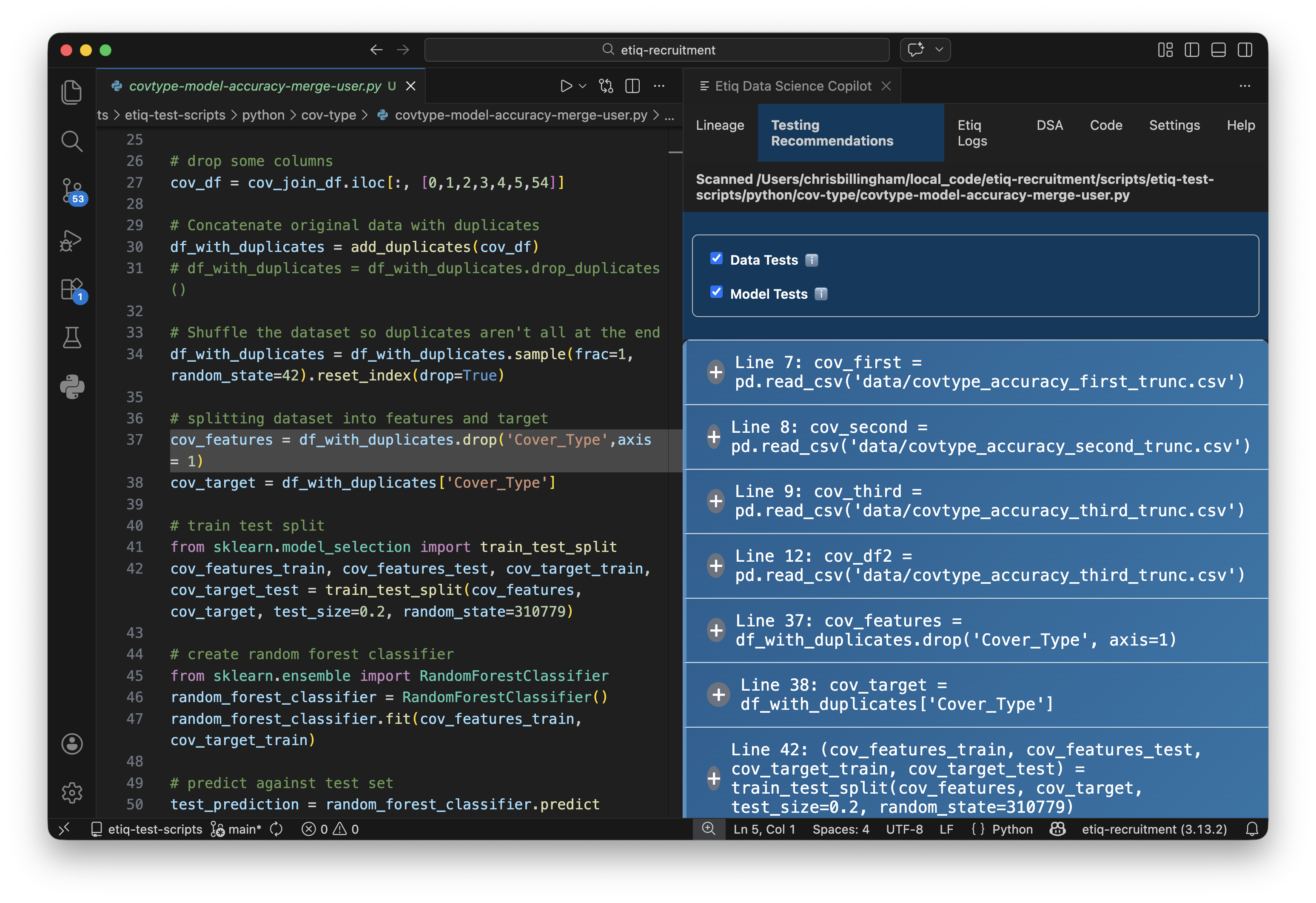

How Etiq Works

01

Install

Install the VS Code extension.

No new environment.

No workflow disruption.

02

Run

Scan your pipeline.

Etiq maps lineage and captures real data objects automatically.

03

Verify

Run contextual tests, trace failures to their root cause, and apply verified fixes.

This is the integrity layer in action

Etiq scans your code, captures every data object, and builds a live lineage network so you can test, trace, and verify what’s actually happening, not what you assume is happening.

THE FOUNDATION

Etiq captures what every other tool ignores

The relationship between your code and your data: mapped, captured, and held persistently at every stage of the pipeline.

Lineage Visualisation

Code is written as a linear sequence of lines. What's actually happening is far more complex: data splits, merges, gets transformed in parallel paths, and recombines.

Etiq scans your Python script and builds a visual network diagram mapping the flow between every data object and code function. Even a simple 60-line script produces a surprisingly complex graph. At 300 or 3,000 lines across multiple files, the diagram becomes essential.
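To make the idea concrete, here is a minimal sketch of how a lineage graph can be derived from a script using Python's standard `ast` module. It is an illustration only, not Etiq's implementation: `build_lineage` and the functions named inside the sample script (`load`, `drop_nulls`, `merge`, `summarise`) are hypothetical, and only simple top-level assignments are handled.

```python
import ast
from collections import defaultdict


def build_lineage(source: str) -> dict[str, set[str]]:
    """Map each assigned name to the names (data objects and functions) it reads.

    Hypothetical helper for illustration; handles plain `x = ...` assignments only.
    """
    lineage: dict[str, set[str]] = defaultdict(set)
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Assign):
            # Every name loaded on the right-hand side is an upstream dependency.
            reads = {
                n.id
                for n in ast.walk(node.value)
                if isinstance(n, ast.Name) and isinstance(n.ctx, ast.Load)
            }
            for target in node.targets:
                if isinstance(target, ast.Name):
                    lineage[target.id] |= reads
    return dict(lineage)


# The script is only parsed, never executed, so its functions need not exist.
script = """
raw = load("orders.csv")
clean = drop_nulls(raw)
users = load("users.csv")
joined = merge(clean, users)
report = summarise(joined)
"""

graph = build_lineage(script)
for name, upstream in graph.items():
    print(name, "<-", sorted(upstream))
```

Even this five-line script is not a straight line: `joined` depends on two separate branches, which is the kind of structure a linear reading of the code hides.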

Data Object Capture

Normally in Python, data only exists while the script runs. Once it finishes, everything disappears. If you want to inspect a dataframe at line 35, you have to write extra code to print or save it.

Etiq captures a copy of every data object at every point in the pipeline and holds it persistently. Every test runs against actual captured data, not assumptions. This is what makes real verification possible.
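As a rough sketch of what capturing a data object means, the snippet below keeps a deep copy of each intermediate object under a step name. `SnapshotStore` and its methods are hypothetical names used for illustration, not Etiq's API, and the store here is in-memory only; writing snapshots to disk so they outlive the process is left out.

```python
import copy


class SnapshotStore:
    """Hypothetical store holding a deep copy of each object at a named step."""

    def __init__(self):
        self._snapshots = {}

    def capture(self, step, obj):
        # Deep copy so later mutations of `obj` cannot change the snapshot.
        self._snapshots[step] = copy.deepcopy(obj)
        return obj

    def get(self, step):
        return self._snapshots[step]


store = SnapshotStore()

rows = store.capture("loaded", [{"id": 1, "amount": 10.0}, {"id": 2, "amount": None}])
rows = store.capture("cleaned", [r for r in rows if r["amount"] is not None])

# A later in-place change does not affect what was captured earlier.
rows[0]["amount"] = 999.0

loaded = store.get("loaded")
cleaned = store.get("cleaned")
print(len(loaded), cleaned[0]["amount"])
```

The deep copy is the important design choice: a plain reference would silently reflect every later mutation, which defeats the purpose of inspecting the data as it was at that step.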

WHAT YOU CAN DO

Test, trace, fix, and document without writing extra code

Four capabilities that replace hours of manual work with a single click.

01 — Test

Automated Test Recommendations

At every point in your code, Etiq recommends the right tests: data quality, distribution, sparsity, missing values, duplicates, outliers, and model performance. Tests run from the side panel. No test code to write, no output to parse, no cleanup. The right test, at the right point, against real data, in one click.
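For a sense of what two of these checks involve when written by hand, here is a minimal sketch of a missing-value test and a duplicate test run against a captured data object. The function names and the sample rows are assumptions made for illustration; they do not describe how Etiq implements its tests.

```python
from collections import Counter


def missing_value_rate(rows, column):
    """Fraction of rows where `column` is absent or None."""
    if not rows:
        return 0.0
    missing = sum(1 for r in rows if r.get(column) is None)
    return missing / len(rows)


def duplicate_keys(rows, key):
    """Sorted list of key values that appear more than once."""
    counts = Counter(r[key] for r in rows)
    return sorted(k for k, n in counts.items() if n > 1)


# Illustrative stand-in for a data object captured mid-pipeline.
captured = [
    {"order_id": 1, "amount": 20.0},
    {"order_id": 2, "amount": None},
    {"order_id": 2, "amount": 35.5},
    {"order_id": 3, "amount": 12.0},
]

missing_rate = missing_value_rate(captured, "amount")
dupes = duplicate_keys(captured, "order_id")
print(missing_rate, dupes)
```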

02 — Trace

Root Cause Analysis

When a test fails, the Data Science Agent traces the failure back through the lineage network, following the actual data flow, not just line numbers. It tests at every upstream node until it finds where the issue originated, then shows you exactly which lines and data objects are affected.
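The tracing step can be sketched as a walk upstream through the lineage graph, re-running the failed check on each node's captured data until it reaches a failing node whose inputs all pass. Everything below is an assumption made for illustration (the `trace_root_cause` function, the graph shape, the sample data); it is not the agent's actual algorithm.

```python
from collections import deque


def trace_root_cause(failed_node, parents, snapshots, check):
    """Return failing nodes whose upstream inputs all pass the check.

    parents:   node -> list of upstream nodes (the lineage graph)
    snapshots: node -> captured data for that node
    check:     function returning True when the data passes
    Assumes `failed_node` itself already fails the check.
    """
    origins, seen, queue = [], {failed_node}, deque([failed_node])
    while queue:
        node = queue.popleft()
        failing = [p for p in parents.get(node, []) if not check(snapshots[p])]
        if not failing:
            # Every input is clean, so the problem was introduced at this node.
            origins.append(node)
        for p in failing:
            if p not in seen:
                seen.add(p)
                queue.append(p)
    return origins


parents = {"report": ["joined"], "joined": ["clean", "users"], "clean": ["raw"]}
snapshots = {
    "raw": [1, 2, 3],
    "clean": [1, 2, 3],
    "users": [1, 2, 3],
    "joined": [1, 2, 2, 3],  # the merge introduced a duplicate id
    "report": [1, 2, 2, 3],
}


def no_duplicates(ids):
    return len(ids) == len(set(ids))


origins = trace_root_cause("report", parents, snapshots, no_duplicates)
print(origins)
```

The symptom shows up in `report`, but the walk stops at `joined`, because both of its inputs are clean.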

03 — Fix

Verified Fixes

The agent suggests a targeted code fix at the precise point where the issue originated, then verifies that the fix actually works by re-running tests against the real data. A closed loop: identify, trace, fix, verify. Unlike AI coding assistants that suggest and hope, Etiq confirms.
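The verify half of that loop amounts to running the same check before and after a candidate fix, on the same captured data. The sketch below assumes a hypothetical `verify_fix` helper and a simple de-duplication fix; it illustrates the idea rather than Etiq's mechanism.

```python
import copy


def has_unique_ids(rows):
    ids = [r["id"] for r in rows]
    return len(ids) == len(set(ids))


def dedupe_by_id(rows):
    """Candidate fix: keep the first row seen for each id."""
    seen, kept = set(), []
    for r in rows:
        if r["id"] not in seen:
            seen.add(r["id"])
            kept.append(r)
    return kept


def verify_fix(captured_input, fix, check):
    """Run the check on the captured data before and after applying the fix."""
    before = check(captured_input)
    # Work on a copy so the captured data itself is never modified.
    after = check(fix(copy.deepcopy(captured_input)))
    return {"passed_before": before, "passed_after": after}


captured = [{"id": 1}, {"id": 2}, {"id": 2}]
result = verify_fix(captured, dedupe_by_id, has_unique_ids)
print(result)
```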

04 — Document

One-Click Documentation

Etiq auto-generates structured documentation explaining your pipeline: data used, processing steps, transformations applied, model decisions. Exportable as PDF. For regulated industries where you need to explain why a pipeline works the way it does, this turns a week of documentation into a button press.

BUILT FOR YOU

Who is Etiq for?

From individual ML engineers to enterprise AI leaders, Etiq provides a verification layer that reduces risk, increases confidence, and standardises quality across every pipeline.

ML Engineers & Data Scientists

You build, debug, and ship pipelines.

✔ Visualise full data lineage across files

✔ Run contextual tests without writing test code

✔ Trace failures to the true root cause

Heads of AI & Engineering

You’re accountable for reliability, velocity, and risk.

✔ Standardised verification across teams

✔ Fewer failures reaching production

✔ Governance-ready documentation on demand

Works where you work. Protects what matters.

No new environments. No workflow disruption. Up and running in minutes.

Inside Your IDE

Installs as a VS Code extension. Supports Cursor, Kiro, and the VS Code family, plus Jupyter Notebooks. No new environment to learn.

Local-First & Private

Core features work entirely offline. Sensitive data never leaves the machine. Built for regulated industries where privacy is non-negotiable.

LLM-Agnostic

Works with Azure, Gemini, Claude, Ollama, or any API-accessible model. Only minimal, relevant code is sent, reducing token cost and hallucination risk.